
Units of entropy

12/11/2023

The history of entropy begins with the work of French mathematician Lazare Carnot, who in his 1803 paper Fundamental Principles of Equilibrium and Movement proposed that in any machine the accelerations and shocks of the moving parts all represent losses of moment of activity.

Although the concept of entropy was originally a thermodynamic construct, it has been adapted in other fields of study, including information theory, psychodynamics, thermoeconomics, and evolution.

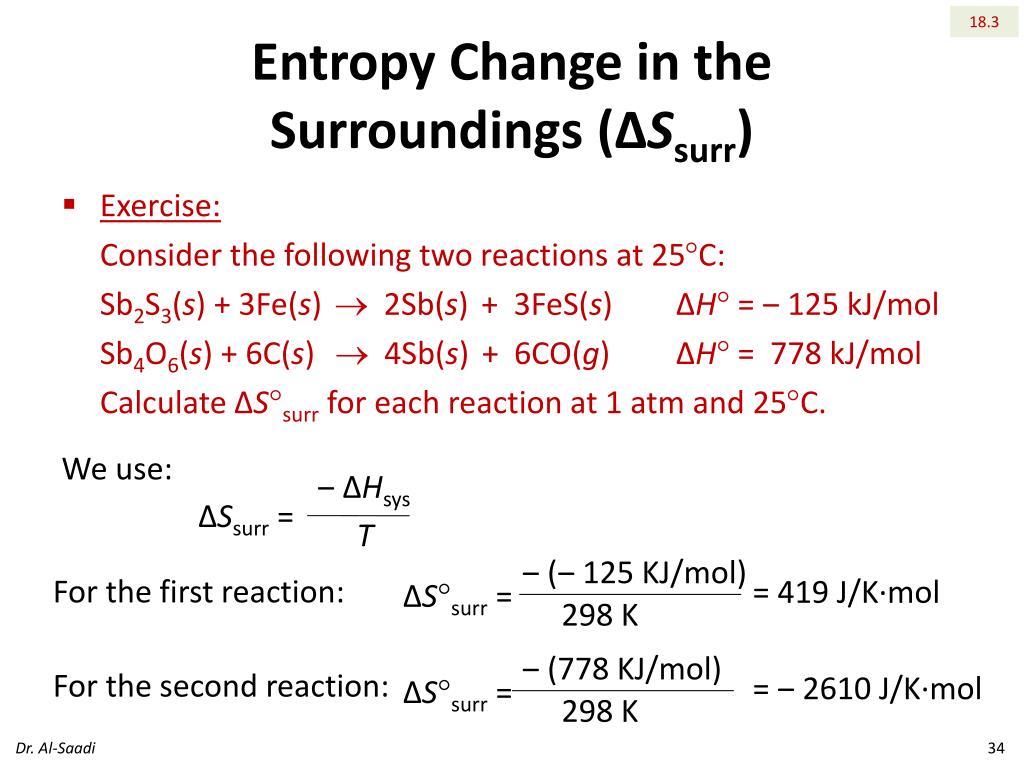

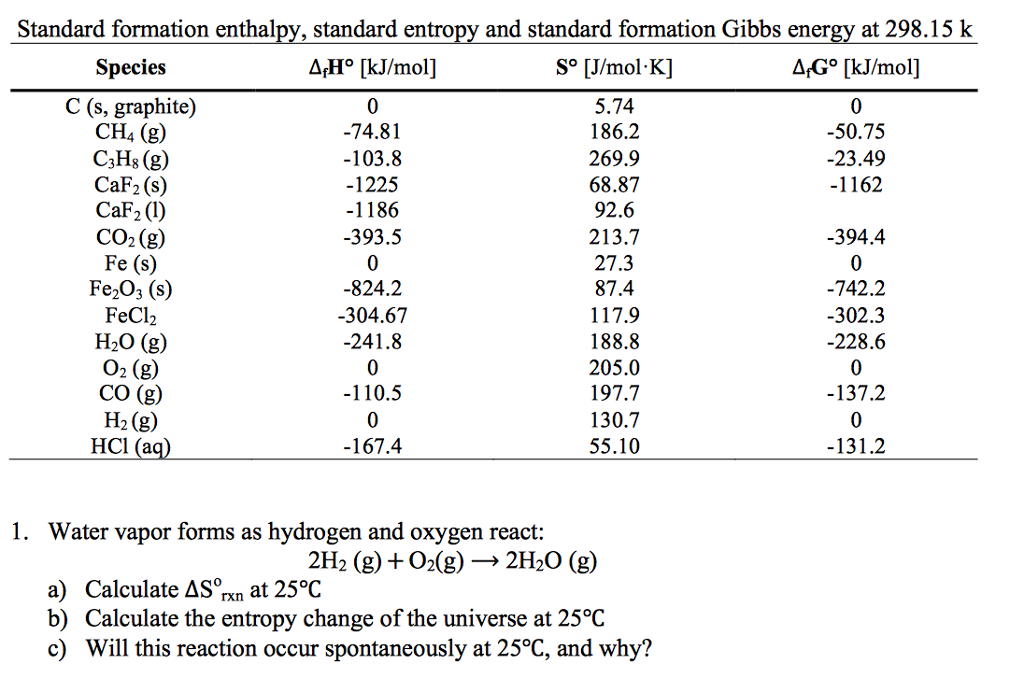

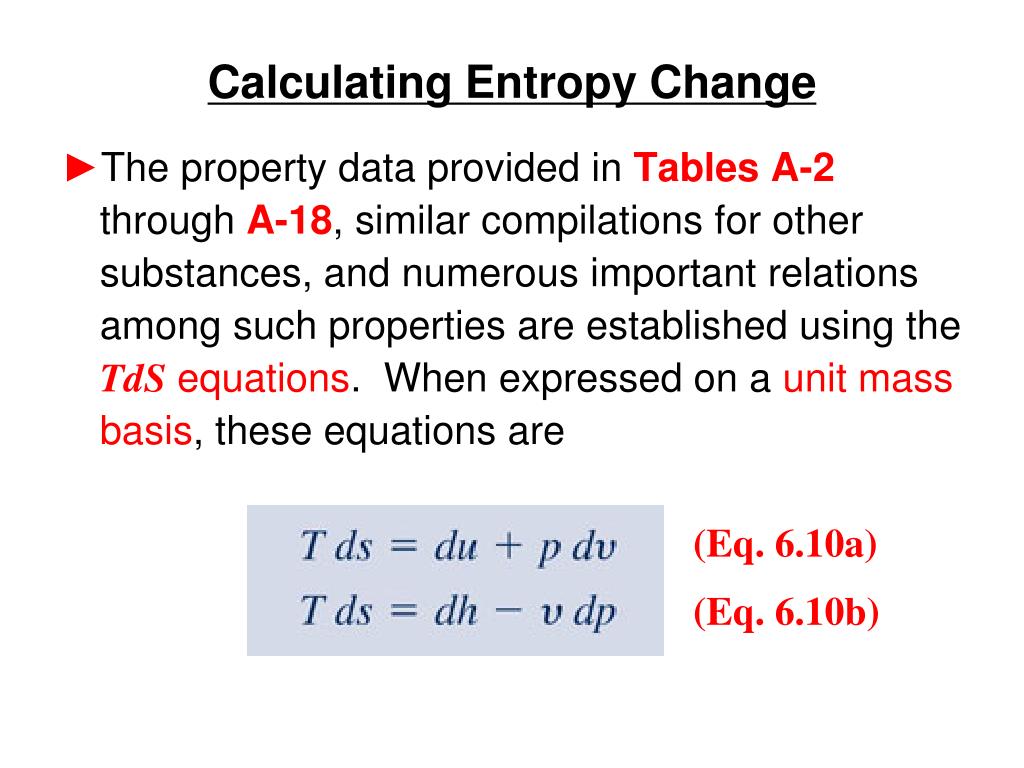

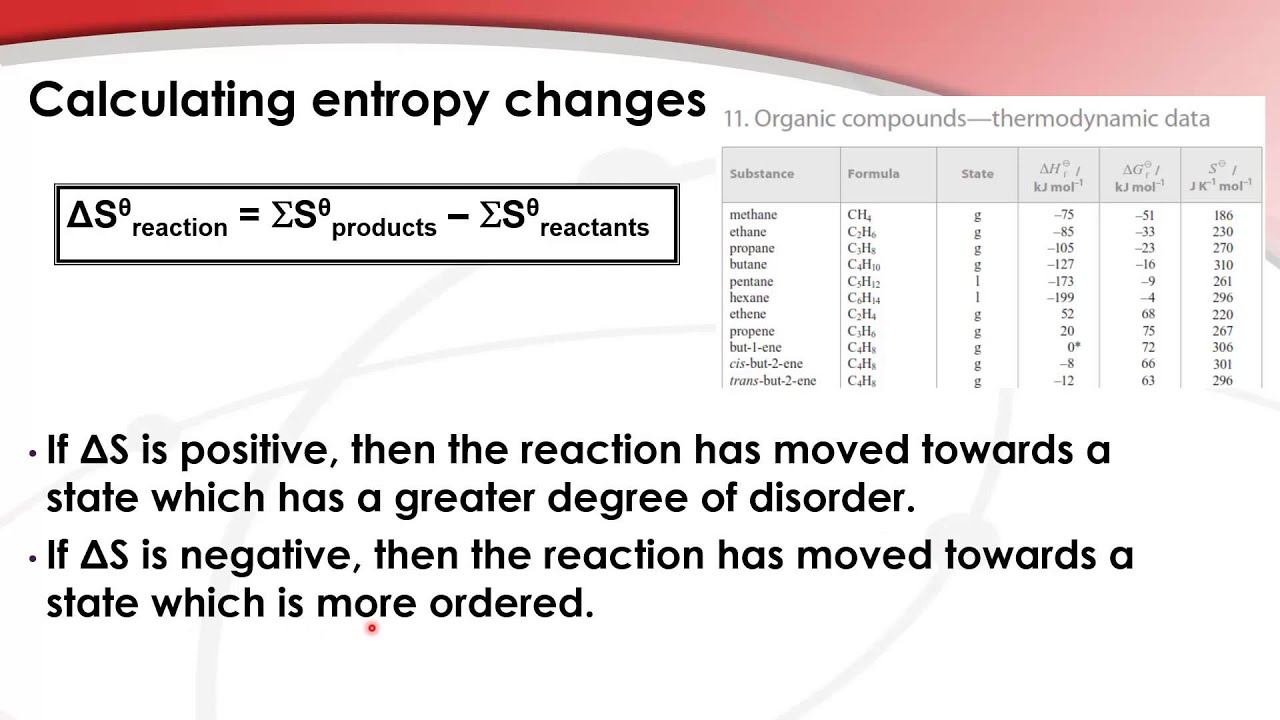

The first law of thermodynamics, formalized through the heat-friction experiments of James Joule in 1843, deals with the concept of energy, which is conserved in all processes. The first law, however, cannot quantify the effects of friction and dissipation. The concept of entropy was developed in the 1850s by German physicist Rudolf Clausius, who described it as the transformation-content, i.e. dissipative energy use, of a thermodynamic system or working body of chemical species during a change of state.

Entropy is an extensive state function that accounts for the effects of irreversibility in thermodynamic systems. Quantitatively, entropy is defined by the differential quantity $dS = \delta Q/T$, where $\delta Q$ is the amount of heat absorbed in an isothermal and reversible process in which the system goes from one state to another, and $T$ is the absolute temperature at which the process is occurring. Entropy is one of the factors that determines the free energy of the system (note the product "TS" in the Gibbs free energy or Helmholtz free energy relations).

This thermodynamic definition of entropy is only valid for a system in equilibrium (because temperature is defined only for a system in equilibrium), while the statistical definition of entropy applies to any system. In terms of statistical mechanics, the entropy describes the number of the possible microscopic configurations of the system. The statistical definition is therefore usually considered the more fundamental one, from which all other definitions and all properties of entropy follow. Entropy increase has often been defined as a change to a more disordered state at a molecular level. In recent years, entropy has been interpreted in terms of the "dispersal" of energy.

Entropy is a function of a quantity of heat which shows the possibility of conversion of that heat into work. The increase in entropy is small when heat is added at high temperature and is greater when heat is added at lower temperature. Thus for maximum entropy there is minimum availability for conversion into work, and for minimum entropy there is maximum availability for conversion into work.
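To make the two definitions concrete, here is a minimal Python sketch. It assumes heat is transferred reversibly at a constant temperature, so the differential relation integrates to Q/T, which also shows that entropy is measured in joules per kelvin (J/K). The function names, the 1000 J of heat, and the two temperatures are illustrative choices, not values from the text. The second function uses Boltzmann's standard form S = k_B ln W for the statistical definition, a formula the text alludes to but does not write out.

```python
import math

# Boltzmann constant in J/K (exact value in the 2019 SI definition).
K_B = 1.380649e-23


def entropy_change(heat_joules: float, temperature_kelvin: float) -> float:
    """Entropy change Q/T in J/K for reversible heat transfer at constant T."""
    if temperature_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return heat_joules / temperature_kelvin


def boltzmann_entropy(microstates: int) -> float:
    """Statistical entropy k_B * ln(W) in J/K for W equally likely microstates."""
    if microstates < 1:
        raise ValueError("the number of microstates must be at least 1")
    return K_B * math.log(microstates)


# Hypothetical example: the same 1000 J of heat added at two temperatures.
heat = 1000.0
ds_cold = entropy_change(heat, 300.0)  # heat added at the lower temperature
ds_hot = entropy_change(heat, 600.0)   # heat added at the higher temperature

print(f"Entropy change at 300 K: {ds_cold:.3f} J/K")
print(f"Entropy change at 600 K: {ds_hot:.3f} J/K")

# A system with a single possible configuration has zero statistical entropy.
print(f"S for W = 1: {boltzmann_entropy(1)} J/K")
```

The same quantity of heat produces twice the entropy increase at 300 K as at 600 K, which matches the statement that the increase is greater when heat is added at lower temperature.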

When a system's energy is defined as the sum of its "useful" energy (e.g. that used to push a piston) and its "useless" energy, i.e. that energy which cannot be used for external work, then entropy may be (most concretely) visualized as the "scrap" or "useless" energy whose energetic prevalence over the total energy of a system is directly proportional to the absolute temperature of the considered system.
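One way to write this split down, assuming the Helmholtz free energy is the relevant relation (the symbols $U$ and $F$ are not used in the text and are introduced here only for illustration), is:

```latex
% U: internal energy, F: Helmholtz free energy ("useful" part at constant T),
% T: absolute temperature, S: entropy, so TS is the "useless" part.
\begin{align}
  F &= U - TS \\
  U &= \underbrace{F}_{\text{useful}} + \underbrace{TS}_{\text{useless}} \\
  \frac{TS}{U} &= T \cdot \frac{S}{U}
\end{align}
```

Under this reading, for fixed $S$ and $U$ the share of the total energy that is unavailable for work grows in direct proportion to $T$.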
